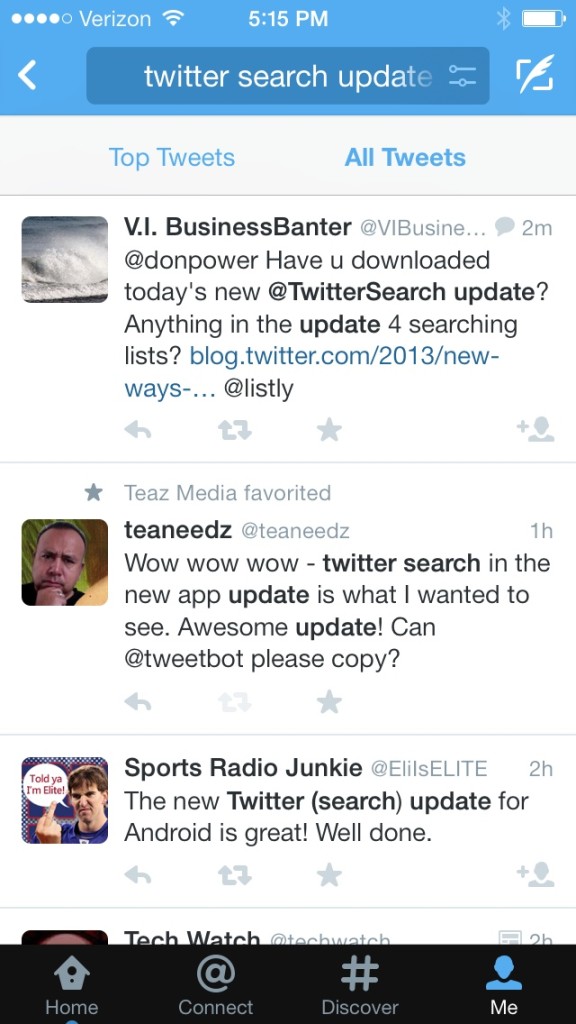

In the spring of 2010, the search team at Twitter started to rewrite our search engine in order to serve our ever-growing traffic, improve the end-user latency and availability of our service, and enable rapid development of new search features. As part of the effort, we launched a new real-time search engine, changing our back-end from MySQL to a real-time version of Lucene. Last week, we launched a replacement for our Ruby-on-Rails front-end: a Java server we call Blender. We are pleased to announce that this change has produced a 3x drop in search latencies and will enable us to rapidly iterate on search features in the coming months.

Twitter search is one of the most heavily trafficked search engines in the world, serving over one billion queries per day. The week before we deployed Blender, the #tsunami in Japan contributed to a significant increase in query load and a related spike in search latencies. Following the launch of Blender, our 95th percentile latencies were reduced by 3x, from 800ms to 250ms, and CPU load on our front-end servers was cut in half. We now have the capacity to serve 10x the number of requests per machine. This means we can support the same number of requests with fewer servers, reducing our front-end service costs.

Figure: 95th Percentile Search API Latencies Before and After Blender Launch

TWITTER’S IMPROVED SEARCH ARCHITECTURE

In order to understand the performance gains, you must first understand the inefficiencies of our former Ruby-on-Rails front-end servers. The front ends ran a fixed number of single-threaded Rails worker processes, each of which processed requests synchronously. We have long known that the model of synchronous request processing uses our CPUs inefficiently. Over time, we had also accrued significant technical debt in our Ruby code base, making it hard to add features and improve the reliability of our search engine. Blender addresses these issues by:

1. Creating a fully asynchronous aggregation service. No thread waits on network I/O to complete.
2. Aggregating results from back-end services, for example, the real-time, top tweet, and geo indices.
3. Elegantly dealing with dependencies between services. Workflows automatically handle transitive dependencies between back-end services.

The following diagram shows the architecture of Twitter’s search engine. Queries from the website, API, or internal clients at Twitter are issued to Blender via a hardware load balancer. Blender parses the query and then issues it to back-end services, using workflows to handle dependencies between the services. Finally, results from the services are merged and rendered in the appropriate language for the client.

Figure: Twitter Search Architecture with Blender

BLENDER OVERVIEW

Blender is a Thrift and HTTP service built on Netty, a highly scalable NIO client-server library written in Java that enables the development of a variety of protocol servers and clients quickly and easily. We chose Netty over some of its other competitors, like Mina and Jetty, because it has a cleaner API, better documentation and, more importantly, because several other projects at Twitter are using this framework.
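As a rough illustration of the pattern described in this post (issue a query to several back-end services without tying up a thread on each call, honor a dependency between services, then merge the results), here is a minimal Java sketch. It is not Blender's code: it uses the standard library's `CompletableFuture` rather than Netty, and every name in it (`AggregationSketch`, `realTimeIndex`, `topTweetIndex`, `geoIndex`, `annotate`) is hypothetical. The stubbed services return canned strings where a real system would perform non-blocking network I/O.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.CompletableFuture;

public class AggregationSketch {

    // Hypothetical stand-ins for back-end index services. Each returns a
    // future immediately instead of making the caller wait for the result.
    static CompletableFuture<List<String>> realTimeIndex(String query) {
        return CompletableFuture.supplyAsync(() -> Arrays.asList("realtime:" + query));
    }

    static CompletableFuture<List<String>> topTweetIndex(String query) {
        return CompletableFuture.supplyAsync(() -> Arrays.asList("top:" + query));
    }

    static CompletableFuture<List<String>> geoIndex(String query) {
        return CompletableFuture.supplyAsync(() -> Arrays.asList("geo:" + query));
    }

    // Hypothetical dependent service: it needs the real-time hits as input,
    // so it can only start once realTimeIndex has completed.
    static CompletableFuture<List<String>> annotate(List<String> hits) {
        return CompletableFuture.supplyAsync(() -> {
            List<String> annotated = new ArrayList<>();
            for (String hit : hits) {
                annotated.add(hit + "+annotated");
            }
            return annotated;
        });
    }

    static CompletableFuture<List<String>> search(String query) {
        // The dependency is declared with thenCompose; nothing blocks here.
        CompletableFuture<List<String>> realTime =
                realTimeIndex(query).thenCompose(AggregationSketch::annotate);
        // Independent services are started side by side.
        CompletableFuture<List<String>> top = topTweetIndex(query);
        CompletableFuture<List<String>> geo = geoIndex(query);

        // Merge once all three branches are done. join() inside this callback
        // returns at once because allOf guarantees completion.
        return CompletableFuture.allOf(realTime, top, geo).thenApply(ignored -> {
            List<String> merged = new ArrayList<>();
            merged.addAll(realTime.join());
            merged.addAll(top.join());
            merged.addAll(geo.join());
            return merged;
        });
    }

    public static void main(String[] args) {
        // Only this demo caller blocks, to print the merged result.
        System.out.println(search("tsunami").join());
        // prints [realtime:tsunami+annotated, top:tsunami, geo:tsunami]
    }
}
```

The point of the sketch is the shape of `search`: dependencies are expressed as chained futures and the merge step runs as a callback, so no worker thread sits idle waiting for a back-end response.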
